Dual Memory LSTM With Dual Attention Neural Network For Spatiotemporal Prediction

Mar 21, 2022


Abstract

Spatiotemporal prediction is challenging because representations are extracted inefficiently and rich contextual dependencies are lacking. This paper proposes a dual memory LSTM with dual attention neural network (DMANet) for spatiotemporal prediction. A new dual memory LSTM (DMLSTM) unit extracts representations by applying differencing operations between consecutive images and adopting a dual memory transition mechanism. To make full use of historical representations, a dual attention mechanism captures long-term spatiotemporal dependencies by computing the correlations between the current hidden representations and the historical hidden representations along the temporal and spatial dimensions, respectively. The dual attention is then embedded into the DMLSTM unit to construct DMANet, which gives the model greater modeling power for both short-term dynamics and long-term contextual representations. An apparent resistivity map (AR Map) dataset is also introduced in this paper. B-spline interpolation is used to enhance the AR Map dataset and to make the apparent resistivity trend curve continuously differentiable in the time dimension. Experimental results show that the proposed method achieves excellent prediction performance in comparison with several state-of-the-art methods.

Keywords: spatiotemporal prediction; dual memory LSTM; dual attention; historical representations

1. Introduction

Spatiotemporal prediction learns representations in an unsupervised manner from unlabeled video data and uses them to perform a prediction task; it is a typical computer vision task. It has been applied successfully to tasks such as future prediction of object locations [1,2], anomaly detection [3], and autonomous driving [4]. Deep learning-based models improve substantially on traditional approaches because they learn adequate representations from high-dimensional data. Deep learning methods suit the spatiotemporal prediction task well because they can extract spatiotemporal correlations from video data in a self-supervised fashion. However, spatiotemporal prediction remains challenging because representations are extracted inefficiently and long-term dependencies are lacking. For example, Convolutional LSTM (ConvLSTM) [5] has been developed to further extract temporal representations, but it ignores spatial representations. Some methods [6,7] have achieved accurate prediction results, but they cause representation loss. Adversarial methods have also been applied to prediction tasks [8,9]; however, they depend significantly on an unstable training process.

1 School of Communication and Information Engineering, Shanghai University, Shanghai 200444, China

2 Key Laboratory of Advanced Display and System Application, Ministry of Education, Shanghai 200072, China

To address these problems, this paper proposes a novel dual memory LSTM with dual attention neural network (DMANet) for spatiotemporal prediction. A dual memory LSTM (DMLSTM) unit based on ConvLSTM [5] is developed for DMANet. It obtains representations of motion by differencing adjacent hidden states or raw images, and it has dual memory structures that store spatial information and temporal information. A dual attention mechanism is proposed and embedded into the DMLSTM unit to extract long-term feature dependencies along the temporal and spatial dimensions, respectively, which enables the model to capture longer and more complex video dynamics. Compared with the spatiotemporal prediction methods above, the main contributions of this paper are as follows. Firstly, a novel DMLSTM unit is proposed to extract representations for spatiotemporal prediction by applying differencing operations between consecutive images and adopting a dual memory transition mechanism. Secondly, a dual attention mechanism is developed to capture long-term frame interactions by computing the correlation between the current hidden representations and the historical hidden representations along the temporal and spatial dimensions, respectively. Finally, DMANet combines the advantages of both components. This architectural design gives the model greater modeling power for short-term dynamics and long-term contextual representations. The proposed method is evaluated on several challenging datasets against different methods, and the experimental results show that it achieves excellent spatiotemporal prediction performance in comparison with several state-of-the-art methods.

The rest of this article is organized as follows. Related work is discussed in Section 2. The dual memory LSTM with dual attention mechanism is described in Section 3. Experimental results and analyses are discussed in Section 4, followed by conclusions in Section 5.

2. Literature Review

Over the past decade, many methods have been proposed for spatiotemporal prediction. Recurrent neural networks (RNNs) [10] with long short-term memory (LSTM) [11] have been increasingly applied to prediction tasks because of their ability to learn representations of a video sequence. In recent years, the LSTM framework based on a sequence-to-sequence model [12] has been adapted to video prediction. Still, its prediction accuracy is limited because such frameworks [12] capture only temporal variations. To extract richer video representations, ConvLSTM [5] replaces fully connected operations with convolution operations in the recurrent state transitions. A deep-learning-based framework [13] is proposed to reconstruct missing data to facilitate the analysis of spatiotemporal series; however, it adds computational cost and lowers prediction efficiency. The bijective gated recurrent unit introduced in [14] exploits recurrent auto-encoders to predict the next frame in some cases. A multi-output, multi-index supervised learning method [15] with LSTM [11] is proposed for spatiotemporal prediction and can model long-term dynamics. To alleviate vanishing gradients, the convolutional LSTM is extended in [6,7] with a zigzag memory flow and a gradient highway unit (GHU). An updated deep learning-based version of ASAP, called the “ASAP deep system”, is proposed in [16] to improve prediction capability. Optical flow warping and RGB pixel synthesizing algorithms [17] have been exploited to perform spatiotemporal prediction. A memory-in-memory network (MIM) is proposed for prediction tasks in [18]. Unlike the recurrent models above, MIM [18] applies differencing in the memory transitions to transform a time-varying polynomial into a constant, which makes the deterministic component predictable. However, these methods [14–18] still struggle with long-term prediction because excessive gate transitions cause a loss of representations.
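The idea that differencing turns a time-varying polynomial into a constant can be checked numerically. The following is a minimal sketch, not taken from [18]; the quadratic coefficients and the sequence length are arbitrary assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical signal: a degree-2 polynomial trend sampled at integer time steps.
t = np.arange(8)
signal = 3 * t**2 + 2 * t + 1

# First-order differencing lowers the polynomial degree by one (the result is linear in t).
first_diff = np.diff(signal, n=1)

# Second-order differencing of a degree-2 polynomial leaves a constant sequence.
second_diff = np.diff(signal, n=2)

print(first_diff)   # [ 5 11 17 23 29 35 41]
print(second_diff)  # [6 6 6 6 6 6]
```

In general, differencing a degree-d polynomial d times yields a constant, which is why a stack of differencing steps can make a polynomial trend easy to predict.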


In addition to recurrent models, other models are also employed for spatiotemporal prediction. A retrospection network is proposed in [19], which introduces a retrospection loss to push the retrospection frames to be consistent with the observed frames. To handle imbalance in the data, a neighborhood cleaning algorithm is developed in [20]. A random forest algorithm extracts the optimal features to perform the prediction task. A variational autoencoder is adopted in [21] to extract nonlinear dynamic features; this model analyzes the correlations between variables and the relationships between historical and present samples. A wide-attention module and a deep-composite module are used in [22] to extract global and local key features. However, these methods [19–22] depend on local representations to some extent, which limits their performance on prediction tasks. An artificial neural network [23] has been proposed to model the unique properties of spatiotemporal data, yielding a more powerful modeling capability for such data. A spatiotemporal prediction system [24] has been developed that focuses on spatial modeling and on reconstructing the complete spatiotemporal signal; this method is effective at modeling coherent spatiotemporal fields. A mixed neural network is proposed in [25] to model dynamic patterns and learn appearance representations from given video frames. A 3D CNN is integrated into an RNN in [26], which extends the representations in the temporal dimension and lets the memory unit store better long-term representations. However, convolutional operations [24–26] account only for short-range intra-frame dependencies because of their limited receptive fields and lack of explicit inter-frame modeling capabilities. Generative adversarial networks [8] are another approach to spatiotemporal prediction. A conditional variational autoencoder method is proposed in [9], which produces future human trajectories conditioned on previous observations and future robot actions. The prediction methods [8,9] aim to generate less blurry frames, but their performance depends significantly on an unstable training process.

A self-attention mechanism is proposed in [27]; it captures long-range dependencies and has proved effective in aggregating salient features among all spatial positions in computer vision tasks [28–30]. A double attention block is proposed in [28], which gathers the features of the whole space into a compact set and then adaptively selects and distributes features to each location. To exploit contextual information more effectively, a criss-cross network [29] introduces a criss-cross attention module that obtains the contextual information of all pixels, which is helpful for visual understanding problems. In addition, unlike multi-scale feature fusion methods, a dual attention network [30] is proposed to combine local features with global dependencies adaptively. However, these methods cannot be applied directly to prediction tasks because they do not model spatiotemporal dependencies.
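To make the notion of aggregating features among all spatial positions concrete, the following is a minimal NumPy sketch of scaled dot-product self-attention over a single feature map. It is a simplification under stated assumptions: the learned query, key, and value projections used in [27] are replaced by the identity, and the function name `spatial_self_attention` and the tensor sizes are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def spatial_self_attention(feature_map):
    """Self-attention over spatial positions of an (H, W, C) feature map.

    Assumption: queries, keys, and values are the raw features themselves
    (no learned projections), which keeps the sketch self-contained.
    """
    h, w, c = feature_map.shape
    x = feature_map.reshape(h * w, c)        # one row per spatial position
    scores = x @ x.T / np.sqrt(c)            # (HW, HW) pairwise similarities
    weights = softmax(scores, axis=-1)       # each row sums to 1
    attended = weights @ x                   # every position mixes all positions
    return attended.reshape(h, w, c), weights

rng = np.random.default_rng(0)
feature_map = rng.standard_normal((4, 5, 8))
attended, weights = spatial_self_attention(feature_map)
print(attended.shape, weights.shape)  # (4, 5, 8) (20, 20)
```

Each output position is a weighted sum over every position in the same frame, so the receptive field is global; nothing in this form relates one frame to another, which is the gap noted above for prediction tasks.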

In summary, prior prediction models each have drawbacks. Different from previous work, we design a novel variant of ConvLSTM [5] to store state representations and extend the attention mechanism to the task of spatiotemporal prediction. This architecture captures rich contextual relationships for better feature representations with intra-class compactness.

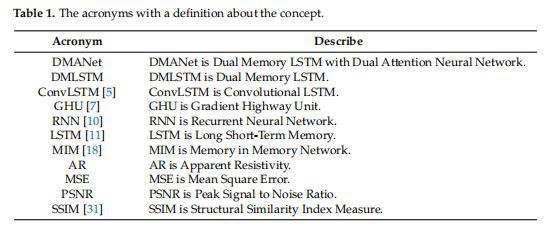

Table 1 lists the acronyms used in the paper together with their definitions.

3. DMA Neural Network

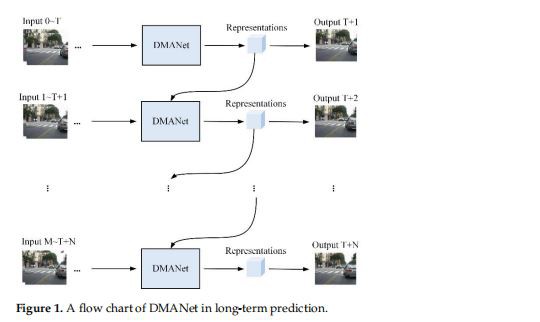

A flow chart of DMANet is shown in Figure 1. Given the input frames, DMANet extracts representations; these serve as the prediction results and can in turn be used to predict the next representations.

This section gives the details of DMANet. Firstly, a novel DMLSTM unit is introduced in Section 3.1. Afterward, a dual attention mechanism is proposed in Section 3.2, which enables the model to benefit from previous relevant representations. Finally, the two are combined to build DMANet for spatiotemporal prediction, which is detailed in Section 3.3.

3.1. Dual Memory LSTM

The DMLSTM unit is inspired by PredRNN++ [7], which adds more nonlinear layers to increase the network depth and strengthen the modeling capability for spatial correlations and temporal dynamics. However, gradient propagation becomes increasingly difficult as the network depth grows, even though the GHU [7] alleviates the problem to a limited extent. Some work [6,7,14] does not perform well in extracting the representations of spatiotemporal sequences across excessive gate transitions, which inevitably cause a loss of representations. Long-range spatial dependencies can be captured by stacked convolution layers; however, the modeling capability for spatiotemporal dynamics is limited by the complex layer-to-layer transition.

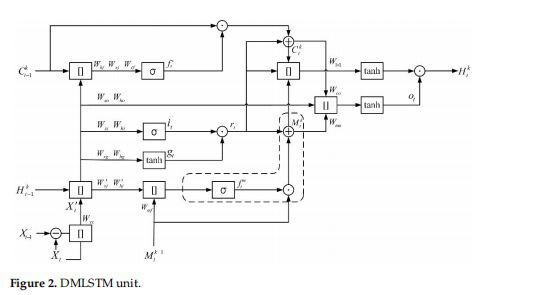

To overcome the limitations mentioned above, a new recurrent unit named DMLSTM is developed for spatiotemporal prediction, as shown in Figure 2. Firstly, an additional memory unit is added to ConvLSTM [5]; this unit stores spatial states, which enables the DMLSTM unit to learn more spatiotemporal representations. The novel transition mechanism is designed by discarding redundant gate structures, such as input gates, because numerous nonlinear structures tend to lose powerful internal representations in pixel-level prediction. In addition, differencing operations on representations have been applied effectively to capture the representations of moving objects. Differencing can therefore be used in prediction tasks to supplement the representation details of moving objects. In the DMLSTM unit, the differencing operation obtains representations of motion by differencing adjacent hidden states or raw images, which gives the unit a more powerful modeling capability for spatiotemporal dynamics.
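The effect of differencing adjacent frames can be illustrated with a small example. The sketch below is only an illustration of the differencing step on raw images, not the DMLSTM transition itself; the helper `make_frame`, the frame size, and the intensity values are hypothetical.

```python
import numpy as np

def make_frame(size, top, left, block=2):
    """Hypothetical frame: constant background with one bright square object."""
    frame = np.full((size, size), 0.5)               # static background
    frame[top:top + block, left:left + block] = 1.0  # moving object
    return frame

# The object moves one pixel to the right between consecutive frames.
prev_frame = make_frame(6, top=1, left=1)
curr_frame = make_frame(6, top=1, left=2)

# Differencing cancels the static background and keeps only what changed.
motion = curr_frame - prev_frame

print(np.count_nonzero(motion))  # 4
print(motion[1])                 # [ 0.  -0.5  0.   0.5  0.   0. ]
```

The difference is zero wherever the scene is static, negative where the object left, and positive where it arrived, so the result isolates the motion information that the text describes as supplementing the details of moving objects.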

3.2. Dual Attention Mechanism

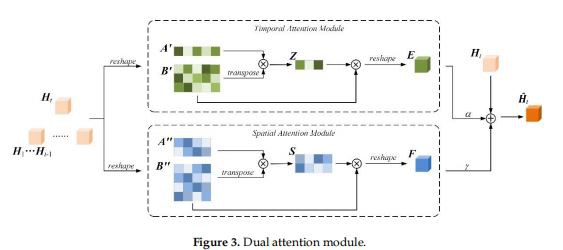

Spatiotemporal prediction predicts future frames by observing previous representations. However, the prediction model should focus more on the historical representations that are related to the predicted content. The attention mechanism [27] can capture long-range dependencies between local and global representations in some practical tasks [32,33]. Moreover, spatiotemporal prediction is challenging because of complex dynamics and appearance changes, which require dependencies in both the temporal and spatial domains. A novel variant of the attention mechanism, named the dual attention mechanism, is therefore proposed. This architecture captures long-term spatiotemporal interactions along the temporal and spatial dimensions, respectively, and the obtained representations are then aggregated for future prediction.


The dual attention module is shown in Figure 3. Its inputs are the hidden state at the current timestamp, $H_t \in \mathbb{R}^{H \times W \times C}$, and the historical hidden states $\{H_1, \ldots, H_{t-1}\} \in \mathbb{R}^{n \times H \times W \times C}$, where $H$ and $W$ are the spatial sizes, $C$ is the number of channels, and $n$ denotes the number of historical hidden representations concatenated along the temporal dimension.
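One way such a module could be realized is sketched below in NumPy. This is a hedged illustration under stated assumptions, not the formulation used in this paper: the scoring functions (scaled dot products), the absence of learned projections, the fusion by addition, and the names `temporal_attention`, `spatial_attention`, and `dual_attention` are all assumptions. Only the tensor shapes, a current state of shape (H, W, C) and a history of shape (n, H, W, C), follow the text.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def temporal_attention(current, history):
    """Weight each historical state by its correlation with the current state.

    current: (H, W, C); history: (n, H, W, C). Returns (H, W, C) and (n,) weights.
    """
    n = history.shape[0]
    query = current.reshape(-1)                   # (H*W*C,)
    keys = history.reshape(n, -1)                 # (n, H*W*C)
    scores = keys @ query / np.sqrt(query.size)   # one score per time step
    weights = softmax(scores)
    return np.tensordot(weights, history, axes=1), weights

def spatial_attention(current, history):
    """Let every current position attend to every position in the history.

    Returns (H, W, C) and (H*W, n*H*W) weights.
    """
    h, w, c = current.shape
    queries = current.reshape(h * w, c)           # (HW, C)
    keys = history.reshape(-1, c)                 # (n*HW, C), also used as values
    scores = queries @ keys.T / np.sqrt(c)
    weights = softmax(scores, axis=-1)
    return (weights @ keys).reshape(h, w, c), weights

def dual_attention(current, history):
    """Assumed fusion: sum of the temporal and spatial attention outputs."""
    temporal_out, _ = temporal_attention(current, history)
    spatial_out, _ = spatial_attention(current, history)
    return temporal_out + spatial_out

rng = np.random.default_rng(0)
current = rng.standard_normal((4, 4, 8))      # H = W = 4, C = 8
history = rng.standard_normal((3, 4, 4, 8))   # n = 3 historical states
fused = dual_attention(current, history)
print(fused.shape)  # (4, 4, 8)
```

The temporal branch produces one weight per historical time step, while the spatial branch produces one weight per pair of current and historical positions; both outputs keep the shape of the current hidden state, so they can be aggregated and passed on to the recurrent unit.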

4. Conclusions

DMANet has been proposed for spatiotemporal prediction in this paper. A DMLSTM unit efficiently extracts representations by applying differencing operations between consecutive images and adopting a dual memory transition mechanism. A dual attention mechanism captures long-term spatiotemporal dependencies by computing the correlations between the current hidden representations and the historical hidden representations along the temporal and spatial dimensions, respectively. DMANet combines the advantages of both, and this architectural design gives the model greater modeling power for short-term dynamics and long-term contextual representations. The experimental results demonstrate that our method performs excellently in spatiotemporal prediction.


Spatiotemporal prediction is a promising avenue for the self-supervised learning of rich spatiotemporal correlations. For future work, we will investigate how to separate moving objects from the background and direct more attention to the moving objects. We will also try to build an apparent resistivity nowcasting system to protect Chinese grottoes from water.

References

1. Yao, Y.; Atkins, E.; Johnson-Roberson, M.; Vasudevan, R.; Du, X. Bitrap: Bi-directional pedestrian trajectory prediction with multi-modal goal estimation. IEEE Robot. Autom. Lett. 2021, 2, 1463–1470. [CrossRef]

2. Song, Z.; Sui, H.; Li, H. A hierarchical object detection method in large-scale optical remote sensing satellite imagery using saliency detection and CNN. Int. J. Remote Sens. 2021, 42, 2827–2847. [CrossRef]

3. Li, Y.; Cai, Y.; Li, J.; Lang, S.; Zhang, X. Spatio-temporal unity networking for video anomaly detection. IEEE Access 2019, 1, 172425–172432. [CrossRef]

4. Yurtsever, E.; Lambert, J.; Carballo, A.; Takeda, K. A survey of autonomous driving: Common practices and emerging technologies. IEEE Access 2020, 8, 58443–58469. [CrossRef]

5. Shi, X.; Chen, Z.; Wang, H.; Yeung, D.Y. Convolutional LSTM network: A machine learning approach for precipitation nowcasting. In Proceedings of the 29th Conference on Neural Information Processing Systems, Montreal, QC, Canada, 7–12 December 2015; pp. 802–810.

6. Wang, Y.; Li, M.; Wang, J.; Gao, Z.; Yu, P. PredRNN: Recurrent neural networks for predictive learning using spatiotemporal LSTMs. In Proceedings of the 31st Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 879–888.

7. Wang, Y.; Gao, Z.; Long, M.; Wang, J.; Yu, P. PredRNN++: Towards a resolution of the deep-in-time dilemma in spatiotemporal predictive learning. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; pp. 5123–5132.

8. Goodfellow, I.J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D. Generative adversarial networks. In Proceedings of the 28th Conference on Neural Information Processing Systems, Montreal, QC, Canada, 8–13 December 2014; pp. 2672–2680.

9. Ivanovic, B.; Karen, L.; Edward, S.; Pavone, M. Multimodal deep generative models for trajectory prediction: A conditional variational autoencoder approach. IEEE Robot. Autom. Lett. 2021, 2, 295–302. [CrossRef]

10. Rumelhart, D.; Hinton, G.; Williams, R. Learning representations by back-propagating errors. Nature 1986, 1, 533–536. [CrossRef]

11. Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 8, 1735–1780. [CrossRef]

12. Sutskever, I.; Vinyals, O.; Le, Q. Sequence to sequence learning with neural networks. In Proceedings of the Advances in Neural Information Processing Systems, Montreal, QC, Canada, 8–13 December 2014; pp. 3104–3112.

13. Das, M.; Ghosh, S. A deep-learning-based forecasting ensemble to predict missing data for remote sensing analysis. IEEE J. Sel. Top. Appl. Earth Observ. Remote Sens. 2017, 12, 5228–5236. [CrossRef]

14. Oliu, M.; Selva, J.; Escalera, S. Folded recurrent neural networks for future video prediction. In Proceedings of the 15th European Conference on Computer Vision, Munich, Germany, 8–14 September 2018; pp. 716–731.

15. Seng, D.; Zhang, Q.; Zhang, X.; Chen, G.; Chen, X. Spatiotemporal prediction of air quality based on LSTM neural network. Alex. Eng. J. 2021, 60, 2021–2032. [CrossRef]

16. Abed, A.; Ramin, Q.; Abed, A. The automated prediction of solar flares from SDO images using deep learning. Adv. Space Res. 2021, 67, 2544–2557. [CrossRef]

17. Li, S.; Fang, J.; Xu, H.; Xue, J. Video frame prediction by deep multi-branch mask network. IEEE Trans. Circuits Syst. Video Technol. 2020, 4, 1–12. [CrossRef]

18. Wang, Y.; Zhang, J.; Zhu, H.; Long, M.; Wang, J.; Yu, P. Memory in memory: A predictive neural network for learning higher-order non-stationarity from spatiotemporal dynamics. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 9146–9154.

19. Chen, X.; Xu, C.; Yang, X.; Yang, X.; Tao, D. Long-term video prediction via criticization and retrospection. IEEE Trans. Image Process. 2020, 29, 7090–7103. [CrossRef]

20. Neda, E.; Reza, F. AptaNet as a deep learning approach for aptamer-protein interaction prediction. Sci. Rep. 2021, 11, 6074–6093.

21. Shen, B.; Ge, Z. Weighted nonlinear dynamic system for deep extraction of nonlinear dynamic latent variables and industrial application. IEEE Trans. Ind. Inform. 2021, 5, 3090–3098. [CrossRef]

22. Zhou, J.; Dai, H.; Wang, H.; Wang, T. Wide-attention and deep-composite model for traffic flow prediction in transportation cyber-physical systems. IEEE Trans. Ind. Inform. 2021, 17, 3431–3440. [CrossRef]

23. Patil, K.; Deo, M. Basin-scale prediction of sea surface temperature with artificial Neural Networks. J. Atmos. Ocean. Technol. 2018, 7, 1441–1455. [CrossRef]

24. Amato, F.; Guinard, F.; Robert, S.; Kanevski, M. A novel framework for spatio-temporal prediction of environmental data using deep learning. Sci. Rep. 2020, 10, 22243–22254. [CrossRef]

25. Yan, J.; Qin, G.; Zhao, R.; Liang, Y.; Xu, Q. Mixpred: Video prediction beyond optical flow. IEEE Access 2019, 1, 185654–185665. [CrossRef]

26. Wang, Y.; Jiang, L.; Yang, M.; Li, L.; Long, M.; Li, F. Eidetic 3D LSTM: A model for video prediction and beyond. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019; pp. 1–14.

27. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L. Attention is all you need. In Proceedings of the 31st Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

28. Chen, Y.; Kalantidis, Y.; Li, J.; Feng, J. A2 nets: Double attention networks. In Proceedings of the 32nd Conference on Neural Information Processing Systems, Montreal, QC, Canada, 2–8 December 2018; pp. 352–361.

29. Huang, Z.; Wang, X.; Wei, Y.; Huang, L.; Shi, H. Ccnet: Criss-cross attention for semantic segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 1, 1–11. [CrossRef]

30. Fu, J.; Liu, J.; Tian, H.; Li, Y. Dual attention network for scene segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 3146–3154.

31. Wang, Z.; Bovik, A.; Sheikh, H. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 4, 600–612. [CrossRef]

32. Liu, Q.; Lu, S.; Lan, L. Yolov3 attention face detector with high accuracy and efficiency. Comp. Syst. Sci. Eng. 2021, 37, 283–295.

33. Li, X.; Xu, F.; Xin, L. Dual attention deep fusion semantic segmentation networks of large-scale satellite remote-sensing images. Int. J. Remote Sens. 2021, 42, 3583–3610. [CrossRef]

34. Srivastava, N.; Mansimov, E.; Salakhutdinov, R. Unsupervised learning of video representations using LSTMs. In Proceedings of the 32nd International Conference on Machine Learning, Lille, France, 6–11 June 2015; pp. 843–852.

35. Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision meets robotics: The KITTI dataset. Int. J. Robot. Res. 2013, 32, 1231–1237. [CrossRef]

36. Dollar, P.; Wojek, C.; Schiele, B.; Perona, P. Pedestrian detection: A benchmark. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 304–311.

37. Liu, J.; Jin, B.; Yang, J.; Xu, L. Sea surface temperature prediction using cubic B-spline interpolation and spatiotemporal attention mechanism. Remote Sens. Lett. 2021, 12, 12478–12487. [CrossRef]