Syntactic Chunking Reveals A Core Syntactic Representation Of Multi-digit Numbers, Which Is Generative And Automatic Part 2

Oct 26, 2023

Data coding

Each number word is uniquely defined by a lexical class (in this experiment we used only ones, tens, or hundreds) and a 1–9 value. For example, the word fifty is the combination of the digit 5 and the class Tens. Similarly, “four hundred” (presumably a single lexical-phonological value in Hebrew) is the digit 4 in class Hundreds. Correspondingly, the cognitive representation of a number word consists of two morphemes, digit and class (McCloskey et al., 1986), so our coding was based on these two morphemes. The decimal word “thousand” was an exception: We considered it as a single morpheme, the lexical class “thousand” with no digit morpheme.

We defined 3 performance measures for each trial, reflecting accuracy in the digit morphemes, in the class morphemes, or both. The digit accuracy rate was defined as the percentage of stimulus digits that appeared in the response, irrespectively of their order and ignoring excessive digits. The word “thousand” was excluded from this measure. The class accuracy rate was defined as the percentage of stimulus lexical classes that appeared in the response, irrespectively of their order and ignoring excessive classes. If the stimulus included a lexical class twice (e.g., the tens class in “ninety thousand and eighty”) but the response included it only once, it scored only 1 accuracy point out of 2. Finally, the morpheme accuracy rate was defined by merging the two above—i.e., the percentage of digit and class morphemes that appeared in the participant’s response, irrespectively of their order. In the text below, we report the morpheme, digit, and class error rates, i.e., the complement to 100% of the accuracy rates.

A fourth possible measure is the word accuracy rate— the percentage of stimulus words that appeared in the response. Similar to the morpheme accuracy rate, this measure considers both the class and the digit, but it also requires the correct pairing of a particular class with a particular digit. For example, repeating twenty-three as thirty-two would be coded as 0% word accuracy and as 100% morpheme accuracy. The word accuracy results are not reported here, but they were essentially the same as the morpheme accuracy results.
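The four measures described above can be illustrated with a minimal Python sketch. It is not the authors' coding script. It assumes each number word is represented as a hypothetical `(digit, lexical_class)` tuple, that “thousand” is coded as `(None, "thousand")`, and that “thousand” counts toward the class and morpheme measures but not the digit measure. The function names `overlap_rate` and `accuracy_rates` are illustrative only.

```python
from collections import Counter

def overlap_rate(stimulus_items, response_items):
    """Percentage of stimulus items that appear in the response,
    ignoring order and ignoring extra items in the response."""
    stim = Counter(stimulus_items)
    resp = Counter(response_items)
    total = sum(stim.values())
    if total == 0:
        raise ValueError("stimulus has no items for this measure")
    # A repeated item is credited at most as many times as it was produced.
    matched = sum(min(count, resp[item]) for item, count in stim.items())
    return 100.0 * matched / total

def accuracy_rates(stimulus, response):
    """Digit, class, morpheme, and word accuracy (in %) for one trial."""
    def digits(words):
        # "thousand" carries no digit morpheme, so it is skipped here.
        return [d for d, _ in words if d is not None]

    def classes(words):
        return [c for _, c in words]

    def morphemes(words):
        # Tag each morpheme so that digits and classes never collide.
        return ([("digit", d) for d in digits(words)]
                + [("class", c) for c in classes(words)])

    return {
        "digit": overlap_rate(digits(stimulus), digits(response)),
        "class": overlap_rate(classes(stimulus), classes(response)),
        "morpheme": overlap_rate(morphemes(stimulus), morphemes(response)),
        "word": overlap_rate(stimulus, response),  # requires correct pairing
    }

# The example from the text: "twenty-three" repeated as "thirty-two".
twenty_three = [(2, "tens"), (3, "ones")]
thirty_two = [(3, "tens"), (2, "ones")]
print(accuracy_rates(twenty_three, thirty_two))
```

Under these assumptions, the twenty-three/thirty-two trial yields 100% morpheme accuracy and 0% word accuracy, as in the text, and a stimulus with the tens class twice earns only one of the two tens points when the response contains a single tens word.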

Statistical analysis

To compare between two conditions, we entered the digit, class, or morpheme error rate of each trial as the dependent variable in a linear mixed model (LMM). Participant and Stimulus were random factors, and the condition was a within-participant, within-stimulus factor. In the few cases in which a model did not converge, we removed the Stimulus random factor. To control for the fact that different participants repeated stimuli of different lengths, Stimulus Length (the number of words) was entered as a covariate. We used R (R Core Team, 2019) with the lme4 package (Bates et al., 2015).

To determine whether the effect of the Condition was significant, we used a likelihood ratio test that compared the LMM to an LMM that was identical except it did not include the Condition factor. For these comparisons, we report the test statistic 2(LL1–LL0), which follows a χ2 distribution (LL0 and LL1 denote the log-likelihoods of the reduced model and the full model), and the corresponding p-value. The degrees of freedom are not reported as they were always 1. To compare the performance of a single participant between two conditions, we used the same method, but the model did not include the Participant and Stimulus Length factors; it included only Stimulus as a random factor and Condition as a within-stimulus factor. As effect size, we report the Condition factor’s coefficient in the model. This coefficient is close to the difference between the conditions’ means, so it is denoted Δ.
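As a rough illustration of the likelihood ratio test described above, the following Python sketch converts two log-likelihoods into the statistic 2(LL1–LL0) and a p-value for 1 degree of freedom. It is not the authors' R code. The function names and the log-likelihood values are hypothetical, and the sketch relies on the standard identity that, for 1 degree of freedom, the upper tail of the χ2 distribution equals erfc(√(x/2)).

```python
import math

def chi2_sf_df1(x):
    """Upper-tail probability P(X > x) of a chi-square variable with df = 1."""
    return math.erfc(math.sqrt(max(x, 0.0) / 2.0))

def likelihood_ratio_test(ll_reduced, ll_full):
    """Return (statistic, p) for two nested models that differ by one parameter.

    ll_reduced is LL0 (model without Condition); ll_full is LL1 (model with it).
    """
    statistic = 2.0 * (ll_full - ll_reduced)
    return statistic, chi2_sf_df1(statistic)

# Hypothetical log-likelihoods, chosen only so that the statistic is about 4.17.
stat, p = likelihood_ratio_test(ll_reduced=-1250.0, ll_full=-1247.915)
print(f"chi2 = {stat:.2f}, p = {p:.3f}")
```

Because the degrees of freedom were always 1, any χ2 value reported in the text can be checked against its p-value with `chi2_sf_df1` alone.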

Results

Four participants performed the 7-word version of the task, and the remaining participants performed the 6-word version.

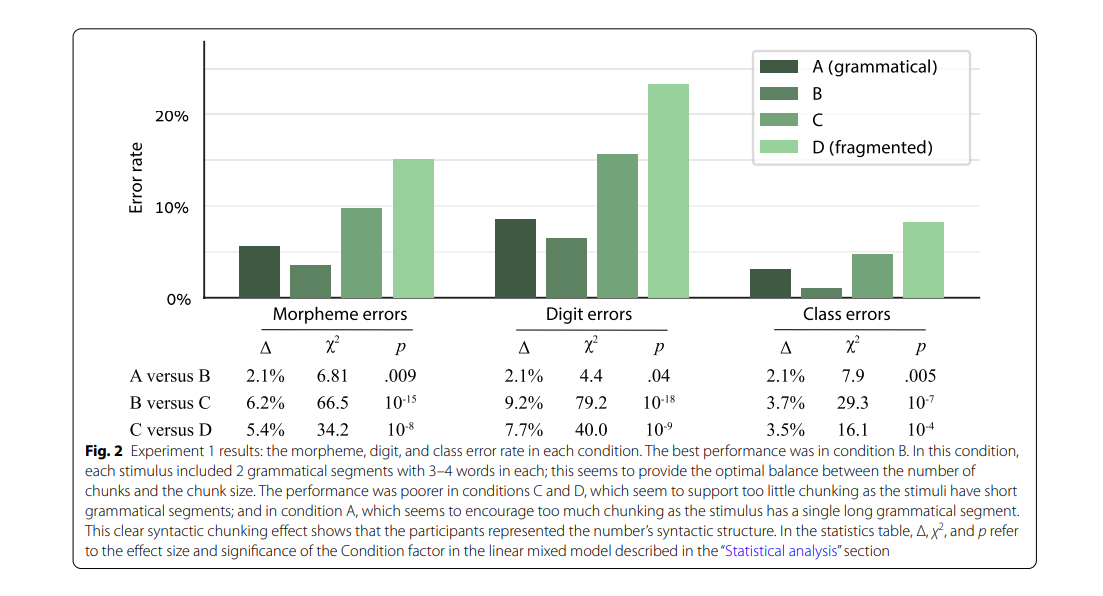

The simplistic prediction of syntactic chunking is that if a stimulus has more or longer grammatical segments, it should be easier to remember. However, longer segments may be disadvantageous if they are too long; such segments may result in creating mega-chunks that exceed the working memory capacity limit and are hard to remember. Thus, the relation between segment size and performance is expected to have an inverted U-shape: The best performance should not be in the stimuli with the longest segments, but in the stimuli whose segment length offers an optimal balance between the number of chunks and the chunk size.

Conditions with too many, too-short grammatical segments would induce relatively little chunking and lead to ineffective memory strategies, and conditions with too few, too-long segments would encourage the creation of oversized, hard-to-remember chunks. As Fig. 2 shows, this was precisely the case: Accuracy was highest in condition B and lower in the other conditions. In particular, the error rate in condition D was significantly higher than in condition C, which in turn had significantly more errors than condition B: a clear effect of syntactic chunking. Namely, although the participants received no particular instructions about chunking strategies, they still used the number’s syntactic structure and created chunks that represent grammatical multi-digit numbers. As expected, the optimal performance was not in condition A, in which the grammatical segments were the longest, but in condition B, which seems to offer the optimal balance between chunk size and number of chunks.

Additional support for the idea of syntax-based chunking comes from analyzing the specific positions within the stimuli in which the errors occurred. Figure 3 shows the digit error rate in each serial position for the participants who repeated 6-word sequences (the 7-word participants were excluded from this analysis to avoid length-related variance). If the participants memorized each stimulus as an unstructured sequence of words, the task is essentially a free-recall task. In such a task, the error rate should typically be the lowest for the initial words in the list and gradually increase for words further down the list (a primacy effect), with some improvement in the last word or words (a recency effect; Murdock, 1962).

Condition D, the fragmented condition, shows this pattern, which is typical of unstructured free-recall tasks. In contrast, conditions A and B show a different pattern, indicating that additional factors were at play here on top of the primacy and recency effects. For example, in condition B the error rate decreased from the 2nd word (thousands) to the 3rd word (hundreds). To examine the pattern in each condition, we analyzed the digit accuracy in each number word in the 6-word numbers, excluding the word “thousand” (for which no digit was encoded) and excluding the last non-thousand word in each number (to avoid the recency effect).

We submitted the digit accuracy of each condition separately to a logistic linear mixed model with the Participant as a random factor and the word’s serial position as a numeric within-participant factor. We did not add the Stimulus as a random factor because such a model reached a singular fit in some conditions, but the results were essentially the same when including this factor. The Word Position effect was significant in condition D (χ2=27.4, p<0.001) but only barely significant in conditions A (χ2=4.7, p=0.03) and B (χ2=4.2, p=0.04); these unimpressive significance levels do not withstand a multiple-comparison correction. To show that the difference between conditions was significant, we submitted the data of all conditions together to the same logistic LMM, now adding the Condition (A/B versus D) and the Condition × Word Position interaction as within-participant factors. The interaction term was significant (χ2=11.5, p<0.001, odds ratio=0.75).

Simple serial recall cannot explain the results in conditions A and B. Syntactic chunking, however, offers a simple explanation for this pattern: In condition D, the participants remembered each stimulus as an unstructured list of words, but in conditions A and B they tended to memorize each stimulus as chunks. Because of this chunking the task was not a simple serial recall task, so it did not show a standard primacy and recency effect. Interestingly, in conditions A and B a primacy effect was observed not only for the sequence as a whole: In both conditions, the performance in word #4 was better than in the preceding and following words, and words 4–5–6 showed an inverse U-shaped pattern. This pattern is in line with the idea that words 4–5–6 were encoded as a separate chunk, with its own primacy and recency effects in the chunk’s first and last words.

The syntactic chunking pattern (better performance in the less-fragmented conditions, and optimal performance in an interim condition) was observed not only at the group level but even for individual participants. Numerically, each of the participants showed better performance in condition B (optimal chunking) than in condition D (maximum fragmentation) (Fig. 4). This difference was significant for all participants except one (morpheme accuracy rate of each of these participants: paired t(19)>1.73, Bonferroni–Holm corrected one-tailed p<0.05). Because the different conditions used the same stimuli and manipulated only the word order within each stimulus, we could also compare matched pairs of stimuli and show that a syntactic chunking effect existed even for single stimuli in most cases: Morpheme accuracy was better in condition D than in B only for 6% of the stimuli (and better in B for 63.5%; same in B and D for 30.5%). Nevertheless, the participants also differed from each other in their best-performance condition: For 13 participants, the optimal condition was B, but for 6 participants it was A and for one participant it was C (bottom panels in Fig. 4).
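The Bonferroni–Holm correction mentioned above for the per-participant tests can be sketched in a few lines of Python. This is the standard Holm step-down procedure, not the authors' analysis code. The function name `holm_adjust` and the p-values in the usage example are hypothetical.

```python
def holm_adjust(p_values):
    """Holm step-down adjusted p-values, returned in the original order.

    The smallest p-value is multiplied by m, the next by m - 1, and so on;
    a running maximum keeps the adjusted values monotonic, and each value
    is capped at 1.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted

# Hypothetical one-tailed p-values for three participants.
raw = [0.01, 0.04, 0.03]
print(holm_adjust(raw))
```

A participant's difference between conditions would then count as significant when the adjusted value falls below 0.05.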

Discussion

The best performance was in condition B, in which each stimulus was a pair of 3-word or 4-word segments. In conditions C and D, the performance deteriorated as the stimulus included more, shorter grammatical segments. This syntactic chunking effect indicates that the participants created a representation of the syntactic structure of whole numbers, and this representation allowed them to create increasingly longer chunks for increasingly longer grammatical segments.

The best performance was not in condition A, which had a single long segment per stimulus, but in condition B. Namely, although the performance improved from condition D to C and from C to B, longer segments (in condition A) disrupted memorization. This pattern of results supports the notion that effective chunking requires an optimal balance between the chunk size and the number of chunks.

It seems that in condition A, the participants used the full syntactic structure of the 5- or 6-digit number to store all words in a single chunk, and this led to exaggerated chunk sizes, and consequently poorer memorization. According to this view, although the error rates had a U-shaped pattern, the underlying syntactic chunking had a monotonic effect: Longer grammatical segments always led to larger chunks, including in condition A, but increasing the chunk size was beneficial only up to an optimal threshold size of 3–4 words per chunk, which occurred in condition B. Beyond that, in condition A, the increased chunking disrupted performance because the chunks became too large for the participants to handle effectively: they exceeded the working memory limit that each chunk is subject to (Cowan, 2001). Below, in Experiment 5, we bring additional evidence to support this conclusion.

Our findings refute an alternative interpretation that attributes the difference between condition A and condition B to number magnitude. This alternative interpretation postulates that the performance in condition A was poorer than in B not for reasons related to syntax and chunking, but because the numbers in condition A were numerically larger and therefore harder to process (a size effect). Two aspects of our data refute the alternative interpretation: First, it cannot explain the error-by-position pattern in Fig. 3. Second, the alternative interpretation predicts that 5- or 6-digit numbers (as in condition A) will always be more difficult to memorize than a pair of shorter numbers (condition B), irrespective of the specific numbers. As we shall see in Experiment 5, this prediction was refuted.

The superior performance in condition B relative to condition A leads to several important conclusions. First, it shows the scope of the syntactic representation of numbers, and in particular, a representation of cross-triplet syntax. In conditions B, C, and D, no grammatical segment crossed the bounds of a single triplet (hundreds+tens+ones). The differences between these conditions can be explained as a within-triplet syntactic representation (for example, a representation capitalizing on the fact that the three words in each triplet have different lexical classes). The situation was different in condition A versus B: The only difference between these two conditions was that condition A, but not B, combined words from the two triplets into a single segment. The significant difference between the two conditions indicates that in condition A, but not in B, the participants created a cross-triplet syntactic representation. In the General Discussion, we return to the implications of this finding.

The second conclusion concerns automaticity. In condition A, the cross-triplet syntactic representation was created even though it was not beneficial—it disrupted the performance. This strongly suggests that the creation of a syntactic representation did not result from a voluntary, conscious-strategic decision, but was an automatic process.

The third conclusion pertains to whether the syntactic representation is retrieved as a rigid template or created dynamically by a generative process. Cowan (2001) proposed that there are at least two different methods of creating chunks, and these two methods differ in the limit they impose on the chunk size. One method is to retrieve a memorized template, which serves as the basis for the chunk, and embed several single items in this template (each single item is a representation from long-term memory). For example, this may be how expert chess players encode complex moves. The number of items in such templates may sometimes be quite large—more than the standard short-term memory capacity of 3–4 items— but the template is still a single chunk. A second method to create chunks is by forming novel ad hoc associations between items. This method is more dynamic and flexible, but the cost is that the chunk size is subject to the working memory capacity limit of 3–4 items. The critical point is that these two methods differ in the restrictions they impose on the chunk size: The latter method is subject to working memory capacity limits, whereas the former is not. Thus, in our experiment, if the syntactic chunks are created in a generative manner, they should be subject to the working memory capacity limit, leading to poor performance in oversized chunks—precisely the pattern we see in condition A. Had the number’s syntactic structure been a memorized template, the participants could have created template-based chunks, which are not subject to the capacity limit, and the performance in condition A (with 1 chunk per stimulus) should have been better than in condition B (2 chunks per stimulus). This was not the case. We therefore conclude that the number’s syntactic structure was not retrieved as a predefined memorized template, but was created by a generative process in real time.

Syntactic chunking genuinely indicates a syntactic representation

Efficient chunking involves two key aspects of the stimulus: detectability and compressibility (Chekaf et al., 2016). The first aspect pertains to the detection of regularities in the stimulus, which provides opportunities for effective chunking, e.g., by setting optimal chunk boundaries. In our case, this would refer to the detection of the grammatical segments. The second aspect refers to the process that compresses the data into a chunk, presumably by relying on some representation with strong associations between the chunk’s elements (Cowan, 2001). In our case, compressibility is presumably driven by the representation of the number’s syntax.

We interpreted Experiment 1 results in terms of compressibility: We argued that the critical difference between the experimental conditions was that they affected the participants’ ability to create syntax-based chunks. Could it be, however, that the conditions differed from each other in the detectability of grammatical segments? In Experiment 1, we intentionally did not provide any cues for chunking (so as not to give any clue that may affect detectability), but in the absence of such cues, the participants may have resorted to other strategies that could give rise to different detectability levels in different conditions. For example, they may have used a simple strategy such as “closing a chunk” after each word, a strategy that may result in more efficient chunking in condition B than in condition D. Alternatively, they may have used overt strategies based on their formal mathematical knowledge, and such strategies may be easier to implement in the grammatical conditions, which presumably have a better fit to the participant’s formal knowledge about numbers. The effects of detectability may have been further amplified by the blocked design of Experiment 1, which may allow the development of condition-specific strategies in each block.

The comparison between conditions A and B in Experiment 1 suggests that this was not the case, because the participants created a syntactic representation even when this did not pay off (in condition A). Nevertheless, we designed Experiments 2 and 3 to specifically refute the alternative interpretation. In Experiment 2, we used a mixed design to discourage block-specific strategies. In Experiment 3, we provided clear cues about the chunk boundaries, to minimize the detectability differences between the conditions.

Experiment 2: mixed design

The design was similar to Experiment 1, but here the different conditions were mixed in a single block. This design should make it hard to use overt strategies because the participants could not know the syntactic structure of the specific stimulus until after it was played out. Thus, a syntactic chunking effect in Experiment 2 would be hard to explain as resulting from overt strategies.

Method

There were 3 conditions with 2, 3, or 4 grammatical segments per stimulus, with 4, 9, and 7 trials in each condition, respectively. All trial types were presented in a single block, in random order (same order for all participants). The participants were the same ones who performed Experiment 1; they performed Experiment 2 immediately before or after Experiment 1 (see “Syntactic chunking task” section). Each participant performed either the 6-word or the 7-word version of the task, as in Experiment 1. The data coding and the statistical analysis were as in Experiment 1, but in the linear mixed model, the Condition factor was a between-stimulus numeric factor rather than a within-stimulus categorical factor. (It was still within-participant.)

Results and discussion

The results of Experiment 1 were essentially replicated: The performance was better in the conditions with fewer, longer segments (Fig. 5). The linear mixed model showed that the Condition effect was significant for the morpheme error rate (Δ=2.2%, χ2=4.17, p=0.04) and the class error rate (Δ=2.5%, χ2=5.09, p=0.02), although not for the digit error rate (Δ=1.9%, χ2=2.48, p=0.12).

These results are hard to explain as an overt strategy. For such an overt strategy to be effective, the participants would have had to determine their strategy on each trial and to do so only after the stimulus was played out, at which time the memorization challenge had already begun. The better and more likely explanation is that grammatical conditions were advantageous due to syntactic chunking.

Experiment 3: overt cueing

This experiment specifically aimed to rule out grammatical segment-detectability as an explanation of the syntactic chunking effect. To this end, we turned detectability into a non-issue by making it as easy as possible in all conditions: We gave the participants clear, explicit cues about how they should split the sequence of words into chunks. If the difference between the conditions in Experiment 1 originated in the detectability of grammatical segments, the overt cues should override any subtle stimulus-specific differences, and the performance should be similar across conditions. If, however, the results of Experiment 1 stemmed from the higher compressibility of grammatical segments, they should be replicated here too.

Method

The participants were 20 adults aged 19;6–52;7 (mean=27;7, SD=7;9). The experiment included only two conditions, identical (same stimuli) to Experiment 1 conditions B (grammatical) and D (fragmented). All participants performed the 7-word version of the task. The conditions were administered as two blocks in counterbalanced order. The procedure was as in Experiment 1, except that now we provided clear cues for the chunk boundaries. Critically, the cues were identical in both conditions (grammatical and fragmented): In both cases, the participants were cued to split each stimulus into two chunks, one with 3 words and one with 4 words. In the grammatical condition, the cue split the stimulus into a 4-digit number starting with the digit 1, followed by a 3-digit number (e.g., thousand two hundred thirty-four; five hundred sixty-five). In the fragmented condition, the cue split the stimulus into two parts of the same length, but neither part was grammatical: The order of words was reversed to avoid any grammatically valid pair of words (five sixty hundred; four thirty hundred thousand).

As cueing, we used two aspects of intonation. First, the experimenter did not say the number words at a fixed pace as in Experiment 1, but as we would say two numbers: There was no delay between the words within each stimulus part and a delay of about 1 s between the two parts of the stimulus. Second, the last word of each stimulus part was said with descending pitch (intonation typical of the last words of sentences), and all preceding words were said in flat intonation. To facilitate attention to these cues, the stimuli were not played from a recording as in Experiment 1 but were said in real time by the experimenter. The data coding and the statistical analysis were as in Experiment 1.

Results and discussion

The results of Experiment 1 were essentially replicated: The morpheme error rate, digit error rate, and class error rate were lower in the grammatical condition than in the fragmented condition (Fig. 6a; with the LMM described in Experiment 1 “Statistical analysis” section, morphemes: Δ=12.3%, χ2=140.5, p<0.001; digits: Δ=12.1%, χ2=136.1, p<0.001; classes: Δ=10.8%, χ2=103.8, p<0.001). Even at the single-subject level, the morpheme error rate was lower in the grammatical condition than in the fragmented condition for every single participant (Additional file 1: Fig. S2).

The difference between the two conditions, which existed although we provided very clear cues for how to divide each stimulus into two chunks, is unlikely to have arisen from different degrees of grammatical segment-detectability in the two conditions. The most likely interpretation of these results is that the grammatical condition, by using valid number syntax, allowed for higher compressibility of the number-word sequence.

Experiment 4: the syntactic representation can handle varying irregular structures

Experiments 1–3 showed that the participants represented the number’s syntactic structure and used it as the basis for chunking. Experiment 4 examined two additional aspects of this syntactic representation: its scope, i.e., the specific syntactic structures that can be represented, and its flexibility, i.e., the ability to quickly switch from one syntactic structure to another. To this end, we included numbers with several different syntactic structures.

Scope. Experiments 1–3 used a limited scope of numbers: All stimuli were based on numbers that included neither 0 nor 1. This design aimed to avoid a complexity that may arise from numbers with 0 and 1, because these two digits create verbal numbers with irregular syntactic structures: In Hebrew, similar to English, the digit 0 is not realized verbally, and the digit 1 in the decade position is realized verbally as a teens word instead of the standard tens word. By avoiding 0 and 1, Experiments 1–3 included only numbers with regular syntactic structures and no numbers with irregular structures. In contrast, Experiment 4 examined whether a syntactic representation would be created also for irregular numbers.

Flexibility. The second aspect we examined is the flexibility of the syntactic mechanisms. In Experiments 1 and 3, all numbers in a given block had the same syntactic structure. Experiment 2 had a mixed design, but it used only a small variety of syntactic structures. Experiment 4 used more syntactic structures and presented them in random order, so we could examine whether the syntactic representation and the processes that create it are flexible enough to serve as the basis for syntactic chunking even in this more demanding scenario.
